1. Introduction

We divide this post into 6 sections. Section 2 is a qualitative description of digital signature schemes. Section 3 motivates the introduction of hash functions along with some of their desired properties. Section 4 describes a hypothetical ideal random function known as a Random Oracle. Section 5 briefly introduces the notion of Probabilistic Turing Machines that will be needed when studying the security of digital signature schemes. Sections 6 and 7 describe 2 pillars introduced by Pointcheval & Stern to prove the resilience of some digital signature schemes against a forgery attack in the Random Oracle model. In particular, Section 6 describes a reduction model to facilitate the security analysis of signature schemes. Section 7 states and proves an important lemma known as the splitting lemma.

There is one caveat: I assume that the reader is familiar with basic probability theory, modular arithmetic, as well as some group theoretic concepts including the notions of cyclic groups and finite fields. A concise introduction to group and field theory can be found in this post. For a more detailed treatment, the reader can refer to e.g., [3].

2. Digital Signature Schemes – A qualitative overview

A digital signature can be thought of as a digital alternative to the traditional handwritten signature. The most important property of a handwritten signature is that it is difficult to forge (at least in principle). Similarly, it is crucial that a digital signature scheme be resilient to forgery (we will formalize the notion of forgery later on). In a handwritten signature scheme, the infrastructure consists mainly of 2 elements: 1) the document or the message to be signed, and 2) the signature. In this scheme, the underlying assumption is that even though the signature is made public, it is extremely difficult (in principle) for anyone other than the signer to reproduce it and apply it on another message or document.

The core of a digital signature scheme is a mathematical construct known as a secret or private key. This secret key is specific to a signer: 2 different signers will have 2 different secret keys. To ensure this in practice we usually provide a very large pool of allowed private keys. We then assign a unique element from this pool to each potential signer. Once selected, the private key must never be made public.

In the handwritten case, the signature depended solely on the unique ability or talent of the signer to create it. Similarly, in the digital case the signature will depend in part on the private key (which we can simplistically think of as the digital counterpart to the handwritten signer’s unique ability). But the digital signature will usually depend on additional information including some random data as well as some available public information. The important design criteria in a digital signature construct are two-fold:

- The digital signature must conceal the private key of the signer (i.e., no one can realistically recover the private key from the signature), and

- Anybody can verify the validity of the signature (e.g., that it originated from a particular signer) using only relevant public information.

The public information that allows external parties to verify the validity of a digital signature is known as a public key. Clearly, the public key must be related to the private key, but it must not divulge any information about it. In general, suppose that private keys are elements of some appropriate finite field, and public keys elements of some appropriate cyclic group $G$ with a generator $g$, and on which the Discrete Logarithm (DL) problem is thought to be hard. One could define the public key $pk$ associated with the private key $sk$ to be the element of $G$ given by $pk = g^{sk}$. While it is computationally easy to calculate $pk$ from $sk$, the reverse is computationally hard. This is because solving for the DL in base $g$ of an element of this cyclic group is thought to be intractable. The hardness of the DL problem provides assurance that $sk$ remains protected.

The above description allows us to define a digital signature scheme the same way as in [4]: “A user’s signature on a message $m$ is a string which depends on $m$, on public and secret data specific to the user, and -possibly- on randomly chosen data, in such a way that anyone can check the validity of the signature by using public data only. The user’s public data are called the public key, whereas his secret data are called the secret key”. More formally, a generic digital signature scheme is defined as a set of 3 algorithms:

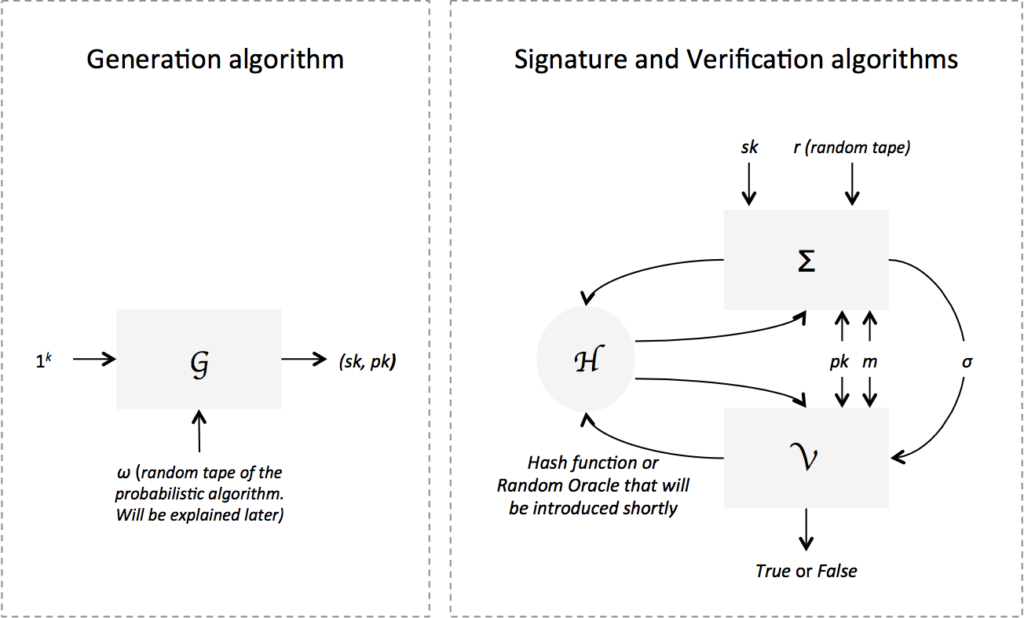

- The key generation algorithm $\mathcal{G}$. It takes as input a security parameter $k$ that ensures cryptographic resilience according to some defined metrics (e.g., $k$ could represent the length in bits of acceptable keys, so the longer they are the more resilient the system is). We write $1^k$ to denote the security parameter input. The algorithm outputs a pair $(pk, sk)$ of matching public and secret keys. The key generation algorithm is random as opposed to deterministic.

- The signing algorithm $\Sigma$. Its input consists of the message $m$ to be signed along with a key pair $(pk, sk)$ generated by $\mathcal{G}$. It outputs a digital signature $\sigma$ on message $m$ signed by the user with private key $sk$. As we will see when we look at specific examples of signing algorithms, the process relies on the generation of random data and is generally treated as a probabilistic algorithm as opposed to a deterministic one.

- The verification algorithm $V$. Its input consists of a signature $\sigma$, a message $m$, and a public key $pk$. The algorithm verifies if the signature is a valid one (i.e., generated by a user who has knowledge of the private key $sk$ associated with $pk$). $V$ is a boolean function that returns True if the signature is valid and False otherwise. It is a deterministic algorithm as opposed to probabilistic.

An important security concern in any digital signature scheme is how to prevent an adversary with no knowledge of a signer’s private key from forging her signature. We define 2 types of attacks that were originally formalized in [1]. 1) The “No-Message” Attack: The hypothetical attacker or adversary can only have access to the public key of the signer. Moreover, the adversary doesn’t have access to any signed message. 2) The “Known-Message” Attacks: The hypothetical attacker or adversary has access to the public key of the signer. In addition, the adversary can have access to a subset of message signature pairs $(m, \sigma)$. There are 4 scenarios that we consider:

- Plain Known-Message Attack: The hypothetical adversary is granted access to a pre-defined set of message signature pairs $(m, \sigma)$ not chosen by him.

- Generic Chosen-Message Attack: The hypothetical adversary can choose the messages that he would like to get signatures on. However, his choice must be done prior to accessing the public key of the signer. The term generic underscores the fact that the choice of messages is decoupled from any knowledge about the signer.

- Oriented Chosen-Message Attack: Similar to the Generic Chosen-Message Attack case except that the adversary can choose his messages after he learns the public key of the signer.

- Adaptively Chosen-Message Attack: This is the strongest of all types of attacks. After the adversary learns the signer’s public key, he can ask for any message to be signed. Moreover, he can adapt his queries according to previously received $(m, \sigma)$ pairs and so is not required to choose all his messages at once.

In addition to the above modi operandi of a hypothetical adversary, a forgery attack can be of 3 natures:

- Total break forgery: Consists of an adversarial algorithm that learns and discloses the secret key of the signer. Clearly, this is the most dangerous type of forgery.

- Universal forgery: Consists of an adversarial algorithm that can issue a valid signature on any message.

- Existential forgery: Consists of an adversarial algorithm that can issue a valid signature on at least one message.

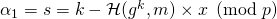

Whenever we conduct security analysis of digital signature schemes, we endeavor to demonstrate the resilience of the scheme against existential forgery under adaptively chosen-message attack. By resilience, we mean that the likelihood of finding an adversarial algorithm that can run in polynomial time and succeed in this experiment is negligible. Going forward, we refer to this experiment as EFACM.

3. Hash functions – Motivation and some properties

Textbook RSA vs. Hashed RSA. To motivate hash functions we will rely on [2] and look at a particular signature scheme known as RSA (the acronym represents the initials of the authors Ron Rivest, Adi Shamir, and Leonard Adleman). RSA’s ability to hide a signer’s private key relies on the hardness of the factoring problem. This is different from the hardness of the DL problem introduced earlier. The factoring problem states that it is computationally hard (i.e., no one has yet found an appropriate algorithm that executes in polynomial time) to find the prime factors of a very large integer. We show that the RSA signature scheme in its original form (also known as textbook RSA) satisfies the first desired property of a signature scheme as described earlier, but fails to guarantee the second. This failure will be addressed by introducing the mathematical construct of hash functions.

The textbook RSA signature scheme is a set of 3 algorithms:

- The key generation algorithm

generates the public and private keys of a given user/signer by doing the following:

generates the public and private keys of a given user/signer by doing the following:

- Choose 2 very large prime numbers, say

and

and  with

with  .

. - Let

(note that given

(note that given  and

and  it is easy to compute

it is easy to compute  . However, given

. However, given  , it is very difficult to find

, it is very difficult to find  and

and  . This is the factoring problem).

. This is the factoring problem). - Find

‘s totient value

‘s totient value  . This value is equal to the number of integers

. This value is equal to the number of integers  that are coprime to

that are coprime to  . If

. If  is prime, one can easily see that

is prime, one can easily see that  . Moreover, when

. Moreover, when  and

and  are co-prime, we have

are co-prime, we have  . Hence we can write

. Hence we can write  (because

(because  and

and  are distinct primes and hence are co-prime). This can be further simplified to give

are distinct primes and hence are co-prime). This can be further simplified to give  (because

(because  and

and  are prime).

are prime). - Choose an integer

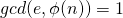

such that the greatest common divisor

such that the greatest common divisor  . Define

. Define  as the public key of the user.

as the public key of the user. - Choose an integer

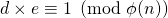

such that

such that  . In other terms

. In other terms  for some integer scalar

for some integer scalar  . Note that even if

. Note that even if  were known, it is computationally hard to find

were known, it is computationally hard to find  since this requires calculating

since this requires calculating  and

and  which in turn, requires solving the prime factorization problem. We then define

which in turn, requires solving the prime factorization problem. We then define  as the private key of the user.

as the private key of the user.

- Choose 2 very large prime numbers, say

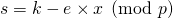

- The signing algorithm

signs a message

signs a message  with a user’s private key

with a user’s private key  . In this textbook RSA scheme we don’t use any additional public information or any randomly generated data to construct the signature. As such, the signing algorithm is deterministic. The message

. In this textbook RSA scheme we don’t use any additional public information or any randomly generated data to construct the signature. As such, the signing algorithm is deterministic. The message  in this scheme is assumed to be an element of

in this scheme is assumed to be an element of  . The algorithm outputs a signature

. The algorithm outputs a signature  . The space of allowed messages is restricted to non-zero integers in this case. What if we want to use this scheme to sign other formats of messages? We will see shortly that this is one of the flexibilities that a hash function introduces.

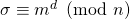

. The space of allowed messages is restricted to non-zero integers in this case. What if we want to use this scheme to sign other formats of messages? We will see shortly that this is one of the flexibilities that a hash function introduces. - The verification algorithm

verifies if a given signature

verifies if a given signature  on message

on message  and public key

and public key  is valid or not by checking whether

is valid or not by checking whether  is equal to

is equal to  . If the equality holds then the algorithm returns True. Otherwise, it returns False and the signature is rejected.

. If the equality holds then the algorithm returns True. Otherwise, it returns False and the signature is rejected.

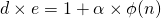

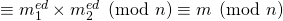

One can easily verify that any signature generated by $\Sigma$ will pass the verification test. Indeed, $\Sigma$ outputs a signature of the form $\sigma = m^d \pmod n$ for a certain private key $(n, d)$ generated by $\mathcal{G}$. This implies that $\sigma^e \equiv m^{ed} \pmod n$. And since $\mathcal{G}$ guarantees that $ed \equiv 1 \pmod{\phi(n)}$, one concludes (by Euler’s theorem, since $m$ is coprime to $n$) that $\sigma^e \equiv m \pmod n$. If all signatures generated by the signing algorithm of a given scheme pass the verification test, we say that the scheme is correct. Clearly, textbook RSA is correct.
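The three textbook RSA algorithms can be sketched in a few lines of Python. This is only a toy illustration under stated assumptions: the primes 61 and 53, the exponent 17, and the function names `keygen`, `sign`, and `verify` are hypothetical choices made for readability, and such small numbers offer no security whatsoever.

```python
from math import gcd

def keygen(p: int, q: int, e: int):
    """Toy textbook-RSA key generation from two given distinct primes."""
    n = p * q
    phi = (p - 1) * (q - 1)      # Euler's totient of n = p*q
    assert gcd(e, phi) == 1      # e must be invertible modulo phi(n)
    d = pow(e, -1, phi)          # e*d = 1 (mod phi(n)); requires Python 3.8+
    return (n, e), (n, d)        # (public key, private key)

def sign(m: int, sk) -> int:
    """Signature is m^d mod n."""
    n, d = sk
    return pow(m, d, n)

def verify(m: int, sigma: int, pk) -> bool:
    """Accept if and only if sigma^e mod n equals m."""
    n, e = pk
    return pow(sigma, e, n) == m % n

# Illustrative parameters only (assumed, not taken from the text).
pk, sk = keygen(61, 53, 17)
sigma = sign(65, sk)
print(verify(65, sigma, pk))
```

The check inside `verify` is exactly the correctness argument: raising the signature to the power `e` undoes the exponentiation by `d`.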

An important question to ask is whether our signature scheme is resilient against forgery. We will see now that textbook RSA is susceptible to forgery. Later, we remedy the situation by introducing a hash function. Let’s look at 2 types of forgeries against textbook RSA:

- The No-Message Attack: Recall that in this type of attack, an adversary has access to the signer’s public key $(n, e)$ but not to any valid signature on a message. Consider the following: the adversary chooses a random element of $\mathbb{Z}_n^*$ and calls it $\sigma$. He then forms a message $m = \sigma^e \pmod n$ and outputs a pair $(m, \sigma)$ as a signature on message $m$. Note that the verification algorithm $V$ will compute $\sigma^e \pmod n$. But this is equal to $m$ as constructed by the adversary. Hence the verifier recognizes $\sigma$ as a valid signature. The adversary successfully created a forgery, highlighting the security weakness of this scheme.

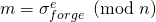

- The Arbitrary Message Attack: Now suppose that the hypothetical adversary decides to forge a signature of a user with public key $(n, e)$ on an arbitrary message $m$. In the No-Message attack, the forgery was not conducted on an arbitrary message but rather, the message was automatically determined by the procedure. In this case, we allow the adversary to obtain valid signatures from the real signer on 2 messages of his choosing. Consider the following procedure: The adversary chooses a random message $m_1$ and computes $m_2 = m / m_1 \pmod n$. The adversary asks the real signer to issue valid signatures $\sigma_1$ on message $m_1$ and $\sigma_2$ on $m_2$. He calculates $\sigma = \sigma_1 \sigma_2 \pmod n$ and issues $(m, \sigma)$. $V$ then computes:

  $$\sigma^e \equiv (\sigma_1 \sigma_2)^e \equiv \sigma_1^e\, \sigma_2^e \equiv m_1 m_2 \equiv m \pmod n$$

  The verifier will recognize $\sigma$ as a valid signature. In this case too, the adversary successfully created a forgery, highlighting the security weakness of this scheme.
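Both forgeries against textbook RSA can be reproduced with a short Python sketch. The key values (`n = 3233`, `e = 17`, `d = 2753`), the target message `1234`, and the helper names are hypothetical and chosen only for illustration. The adversary's code never reads `d` directly; it only calls `sign` as a signing oracle in the second attack.

```python
import secrets
from math import gcd

# Toy key pair (assumed for illustration): n = 61 * 53.
n, e, d = 3233, 17, 2753

def sign(m: int) -> int:
    """The real signer, used by the adversary only as an oracle."""
    return pow(m, d, n)

def verify(m: int, sigma: int) -> bool:
    return pow(sigma, e, n) == m % n

def random_unit(exclude=()) -> int:
    """Random element of Z_n^* in [2, n-1], avoiding the excluded values."""
    while True:
        x = secrets.randbelow(n - 2) + 2
        if gcd(x, n) == 1 and x not in exclude:
            return x

# No-message attack: pick the signature first, derive the message from it.
sigma = random_unit()
m = pow(sigma, e, n)
no_message_ok = verify(m, sigma)

# Arbitrary-message attack: split the target into two signable factors.
target = 1234
m1 = random_unit(exclude=(target,))
m2 = target * pow(m1, -1, n) % n          # m2 = target / m1 (mod n)
forged = sign(m1) * sign(m2) % n          # sigma_1 * sigma_2 (mod n)
arbitrary_ok = verify(target, forged)

print(no_message_ok, arbitrary_ok)
```

The second attack works because textbook RSA signing is multiplicative: the product of two signatures is a signature on the product of the two messages.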

One way of addressing the forgery cases described earlier is by introducing a map $H$: (message domain) $\rightarrow \mathbb{Z}_n^*$ and applying it to the message to be signed. The signing algorithm $\Sigma$ would then compute the signature as follows: $\sigma = H(m)^d \pmod n$. The verification algorithm would simply check if $\sigma^e \pmod n$ is equal to $H(m)$. This modified scheme is referred to as the hashed RSA scheme.
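A minimal sketch of hashed RSA follows, under loudly stated assumptions: the map `H` is instantiated here as SHA-256 reduced modulo $n$, which is an illustrative choice and not a construction prescribed by the text, and the modulus is built from two Mersenne primes picked only so that the example runs instantly. These parameters are not secure.

```python
import hashlib

# Assumption: two Mersenne primes used purely for illustration.
p, q = 2**61 - 1, 2**89 - 1
n = p * q
e = 65537
d = pow(e, -1, (p - 1) * (q - 1))   # private exponent; requires Python 3.8+

def H(message: bytes) -> int:
    """Hypothetical hash-to-integer map: SHA-256 digest reduced modulo n."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % n

def sign(message: bytes) -> int:
    """Sign the hash of the message rather than the message itself."""
    return pow(H(message), d, n)

def verify(message: bytes, sigma: int) -> bool:
    """Accept if and only if sigma^e mod n equals H(message)."""
    return pow(sigma, e, n) == H(message)

sigma = sign(b"pay Alice 10")
print(verify(b"pay Alice 10", sigma))
```

Because messages are now arbitrary byte strings that are hashed before signing, the scheme is no longer limited to integer messages.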

What are some of the characteristics of $H$ that will minimize the risk of forgery? We list 2 of them:

- $H$ should exhibit collision resistance: That means that it should be practically impossible to find 2 distinct messages $m_1$ and $m_2$ such that $H(m_1) = H(m_2)$. Suppose this were not the case. Then if $(m_1, \sigma)$ is a valid signature, the hypothetical adversary could find a message $m_2$ such that $H(m_2) = H(m_1)$ and so would successfully issue a forged signature $(m_2, \sigma)$.

- $H$ should exhibit pre-image resistance: That means that it should be practically impossible to invert $H$ and find $m$ such that $H(m) = h$ for any given $h$. We will now see how this property could have prevented the 2 types of forgery attacks in the textbook RSA scheme:

    - The No Message Attack case: The forger chooses an arbitrary $\sigma$ as we saw earlier, and calculates $h = \sigma^e \pmod n$. But now, for $\sigma$ to be a successful forgery, the adversary still needs to find a message $m$ such that $H(m) = h$. And so if $H$ is difficult to invert, this type of forgery will fail with overwhelming probability.

    - The Arbitrary Message Attack case: Following the same logic as in the previous attack, an adversary trying to forge a signature on a message $m$ in the hashed RSA scheme must find $m_1, m_2$ s.t. $H(m) = H(m_1) H(m_2) \pmod n$ in order to be successful. If $H$ is difficult to invert, then this forgery will fail with overwhelming probability.

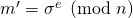

To summarize, we motivated the introduction of a map $H$ in a digital signature scheme such that $H$ exhibits at least the following 3 characteristics:

- The domain of $H$ consists of arbitrary messages of various lengths. We represent the domain by $\{0,1\}^*$, and get $H: \{0,1\}^* \rightarrow \{0,1\}^{\ell}$. $\{0,1\}^{\ell}$ denotes binary strings of length $\ell$. The notation $\{0,1\}^*$ denotes binary strings of arbitrary length.

- $H$ is collision resistant.

- $H$ is pre-image resistant.

Such an $H$ is referred to as a hash function and is likely to limit the cases of successful forgeries. One can also conclude that for all practical purposes, the output of such an $H$ can be thought of as random-looking.

Hash functions in Non-Interactive Zero Knowledge schemes. In 1986, a new paradigm for signature schemes was devised. It consisted in creating an interaction between the signer and the verifier before the verification of the signature took place. The purpose of this interaction was to allow the signer to demonstrate to the verifier that she knows the private key associated with a certain public key without revealing her private key. The idea of demonstrating that you own a piece of information or knowledge without revealing it forms the basis of a set of cryptographic protocols known as zero-knowledge identification protocols. As an example we look at the Schnorr digital signature scheme:

- The key generation algorithm $\mathcal{G}$: Consider a large finite field $\mathbb{F}_p$ of prime order $p$. Let $g$ be a generator of its associated multiplicative subgroup $\mathbb{F}_p^*$. Choose a random private key $x$ and define its associated public key $y = g^x$. Note that since the Discrete Logarithm problem is intractable on the multiplicative subgroup $\mathbb{F}_p^*$, it will be hard for any external party to solve for $x$ given $y$.

- The signing algorithm $\Sigma$ signs a message $m$ as follows:

    - It randomly chooses a value $k$ known as a commitment and computes $r = g^k$.

    - It sends $r$ to the verifier $V$ who then randomly chooses a value $c$ known as a challenge.

    - The verifier $V$ then sends $c$ back to $\Sigma$ who now computes $s = k + cx$.

    - $\Sigma$ issues a signature on message $m$ in the form of a triplet $(r, c, s)$.

- The verification algorithm $V$ verifies if $g^s$ is equal to $r \cdot y^c$. If the equality holds then the algorithm returns True. Otherwise, it returns False and the signature is rejected.

One can quickly verify the correctness of the Schnorr signature scheme. Indeed, let $(y, x)$ be a key pair generated by $\mathcal{G}$ and let $(r, c, s)$ be a signature generated by $\Sigma$ using $x$. Then $g^s = g^{k + cx} = g^k \cdot (g^x)^c = r \cdot y^c$.
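The interactive exchange between signer and verifier can be simulated in Python. This is a sketch under assumptions: the tiny parameters `p = 23` and `g = 5` (5 generates the multiplicative group modulo 23) and all function names are hypothetical, and exponents are reduced modulo the group order `p - 1`, which leaves every power of `g` unchanged.

```python
import secrets

# Toy parameters (assumed): 5 generates the multiplicative group mod 23.
p, g = 23, 5
order = p - 1                      # size of the multiplicative group

def keygen():
    x = secrets.randbelow(order - 1) + 1   # private key in [1, order-1]
    return x, pow(g, x, p)                 # (private key, public key)

def commit():
    k = secrets.randbelow(order)           # signer's random value
    return k, pow(g, k, p)                 # keep k secret, send r

def challenge() -> int:
    return secrets.randbelow(order)        # verifier's random challenge c

def respond(k: int, c: int, x: int) -> int:
    return (k + c * x) % order             # s = k + c*x

def verify(r: int, c: int, s: int, y: int) -> bool:
    return pow(g, s, p) == (r * pow(y, c, p)) % p

x, y = keygen()
k, r = commit()            # signer -> verifier: r
c = challenge()            # verifier -> signer: c
s = respond(k, c, x)       # signer -> verifier: s
print(verify(r, c, s, y))
```

The signer's secret `x` never leaves `respond` unmasked: it is always blinded by the fresh random value `k`.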

It was shown by Fiat and Shamir that interactive proofs of the kind described in the Schnorr scheme above could be replaced by a non-interactive equivalent. The way to do so is to introduce a truly random function that can generate the challenge $c$ originally created by $V$ in the interactive case. Although hash functions are not truly random, they could play that role in practice. The Fiat-Shamir transformation paved the way to what is known as Non-Interactive Zero Knowledge Signature Schemes or NIZK for short.
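A hedged sketch of the non-interactive variant: the verifier's random challenge is replaced by a hash of the message and the commitment. The concrete choices below are assumptions for illustration only: hashing the message concatenated with `r` using SHA-256, reducing the digest modulo the group order, and the toy group `p = 23`, `g = 5`.

```python
import hashlib
import secrets

# Toy parameters (assumed): 5 generates the multiplicative group mod 23.
p, g = 23, 5
order = p - 1

def challenge(message: bytes, r: int) -> int:
    """Hash-derived challenge standing in for the verifier's random choice."""
    data = message + r.to_bytes(4, "big")
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % order

def keygen():
    x = secrets.randbelow(order - 1) + 1
    return x, pow(g, x, p)

def sign(message: bytes, x: int):
    k = secrets.randbelow(order)
    r = pow(g, k, p)
    c = challenge(message, r)        # no interaction with a verifier needed
    s = (k + c * x) % order
    return r, c, s

def verify(message: bytes, sig, y: int) -> bool:
    r, c, s = sig
    # Recompute the challenge, then run the same check as the interactive scheme.
    return c == challenge(message, r) and pow(g, s, p) == (r * pow(y, c, p)) % p

x, y = keygen()
sig = sign(b"hello", x)
print(verify(b"hello", sig, y))
```

Anyone holding the public key can now check the signature offline, since the challenge is recomputable from public data.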

4. The Random Oracle model

It turns out that proving the security (e.g., resilience against forgery) of a scheme whenever hash functions are involved is not that straightforward. A setting with a hash function is known as a standard model. Rather than opting for no proof at all, a new idealized setting was devised in which cryptographic hash functions are replaced with a utopian counterpart known as a random oracle or RO for short. In this environment, it becomes easier to prove the security of various classes of signature schemes. Obviously, security in the RO model does not necessarily imply security in the standard model where a pre-defined hash function such as SHA-256 is used. Nevertheless, an RO proof allows us to gain a level of confidence higher than if we had no proof at all. There is still an ongoing debate regarding the merits of security proofs in the RO model. In what follows, we describe the RO setting and highlight how it differs from the standard model. Our approach follows that of [2].

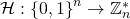

We can think of the RO as a black box that takes in binary strings of a certain length and outputs binary strings of a possibly different length. No one knows how the box works. Any user can send an input $x$ to RO and receive an output $y$ in return. We say that we query RO with input $x$. Moreover, RO needs to be consistent. That means that if query $x$ resulted in output $y$, any subsequent query to RO with the same input $x$ must always result in the same output $y$.

Another way of thinking of the box is as defining a function $H$ not known to anyone in advance, whose output on a certain query is revealed only when the query is executed. Since $H$ is not known in advance, it can be considered as a random function. We can interpret the function $H$ in 2 equivalent ways:

- Say $H$ maps $n$-long bit strings $\{0,1\}^n$ to $\ell(n)$-long bit strings $\{0,1\}^{\ell(n)}$ for some appropriate function $\ell$. One way of representing $H$ is as a very long string where the first $\ell(n)$ bits represent $H(0 \ldots 00)$, the second $\ell(n)$ bits represent $H(0 \ldots 01)$, and so on, where the input, read as a binary number, is increased by 1 every time. Hence, we can think of $H$ as a $2^n \ell(n)$-bit string. Conversely, any $2^n \ell(n)$-bit string can be thought of as a certain mapping $H$. We can see that there is a total of $2^{2^n \ell(n)}$ different $H$ mappings that have the desired input and output lengths. Choosing $H$ randomly is tantamount to uniformly picking one map among the $2^{2^n \ell(n)}$ different possibilities.

- Note that randomly choosing $H$ as per the procedure described above and then storing it somewhere is not realistic. This is due to its sheer size which is exponential in the number of bits. We need to think about what it means for $H$ to be random using a more pragmatic but equivalent way. We imagine that the black box described earlier generates random outputs for $H$ on the fly whenever queried. The box would also keep a table of pairs of the form $(x_i, y_i)$ for all the inputs $x_i$ that have been queried so far, along with their corresponding outputs $y_i$. If a new query is executed, the box checks if it exists in the table. If so, it returns the stored output. If not, then it randomly generates an output and adds the pair to the table.
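The second, on-the-fly interpretation translates almost directly into code. The sketch below is a simulation under assumptions: the class name `RandomOracle`, the 32-byte output length, and the use of Python's `secrets` module as the source of randomness are all illustrative choices.

```python
import secrets

class RandomOracle:
    """Lazily sampled random function: outputs are drawn only when queried."""

    def __init__(self, out_bytes: int = 32):
        self.out_bytes = out_bytes
        self.table = {}                      # pairs (x_i, y_i) seen so far

    def query(self, x: bytes) -> bytes:
        if x not in self.table:
            # New input: draw a fresh uniformly random output and record it.
            self.table[x] = secrets.token_bytes(self.out_bytes)
        # Repeated input: return the recorded output (consistency).
        return self.table[x]

ro = RandomOracle()
first = ro.query(b"some input")
second = ro.query(b"some input")
print(first == second)
```

The table grows only with the number of queries actually made, which is what makes this view practical compared with storing the whole exponentially long string.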

We don’t know of the existence of any RO in real life. However the RO model provides a methodology to design and validate cryptographic schemes in the following sense:

- Step 1: Design a scheme and prove its security in the RO model.

- Step 2: Before implementing the scheme in a practical context, instantiate the RO random function $\mathcal{H}$ with a cryptographic hash function $H$ (e.g., SHA-256). Whenever the scheme queries $\mathcal{H}$ on input $x$, the practical implementation computes $H(x)$ instead.
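As a minimal sketch of Step 2, the snippet below replaces the oracle query with a call to SHA-256 from Python's standard `hashlib` module. The function name `instantiated_oracle` is hypothetical and only serves the illustration.

```python
import hashlib


def instantiated_oracle(x: bytes) -> bytes:
    """Stand-in for the RO query: a fixed, public, deterministic hash."""
    return hashlib.sha256(x).digest()


digest = instantiated_oracle(b"message to sign")
print(digest.hex())
```

Unlike the RO, this function is fixed once and for all: anyone can evaluate it offline, and nothing about it is sampled at random.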

Note that the term instantiate used above is justified: a practical hash function is an instance in the sense that it is a well defined function and so is not random (as is the case with the RO function). However, one hopes that the hash function behaves well enough so as to maintain the security of the scheme proven under the RO model. But this hope is not scientifically grounded. We mention verbatim the warning of [2]: “a proof of security for a scheme in the RO model should be viewed as providing evidence that the scheme has no inherent design flaws, but should not be taken as a rigorous proof that any real-world instantiation of the scheme is secure”.

We observe that the RO random function exhibits the desired properties of hash functions we highlighted earlier, in particular pre-image resistance and collision resistance.

Pre-image resistance: We show that RO behaves in a way similar to functions that are pre-image resistant (also known as one-way functions). The subtlety lies in the choice of the verb behave, because RO is not a fixed function but rather randomly chosen and not known in advance. So what we will show is that given a polynomial-time probabilistic algorithm $\mathcal{A}$ (we will discuss this in a bit more detail in the Probabilistic Turing machines section to follow) that runs the following experiment:

- A random $\mathcal{H}$ is chosen as described earlier.
- A random input $x$ is selected.
- $\mathcal{H}(x)$ is evaluated and assigned to $y$.
- $\mathcal{A}$ takes $y$ as an input and outputs $x'$ such that $\mathcal{H}(x') = y$.

then the probability of success of $\mathcal{A}$ is negligible. To see why this is the case, we note that $\mathcal{A}$ succeeds if and only if one of the following 2 situations occurs:

- $\mathcal{A}$ chooses $x' = x$.
- $\mathcal{A}$ chooses $x' \neq x$, but RO assigns to $x'$ the same value as $y$.

Suppose that $\mathcal{A}$ can make a total of $q$ queries to RO, where $q$ is polynomial in the security parameter $k$. The security parameter $k$ was briefly introduced earlier in the context of the generation algorithm of a digital signature scheme. It refers to a design parameter such as the length of the output of a hash function, or the length of key pairs in bits. In our case, we restrict ![]() to be ![]() . Since by construction $y$ is independent of $x$, $\mathcal{A}$ knows nothing about $x$ although it knows $y$. And so the probability that $\mathcal{A}$ chooses a value $x'$ that is equal to $x$ (i.e., situation 1 from above) is equal to:

For situation 2 to materialize, RO must randomly pick a value $\mathcal{H}(x')$ that is equal to $y$ and such that $x' \neq x$. The probability of this happening is equal to:

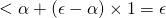

We then conclude that:

Collision resistance: We show that RO behaves in a way similar to functions that are collision-resistant. By that we mean that for a given polynomial-time probabilistic adversary $\mathcal{A}$ that runs the following experiment:

- A random $\mathcal{H}$ is chosen as described earlier.
- $\mathcal{A}$ outputs $x$ and $x'$ such that $x \neq x'$ and such that $\mathcal{H}(x) = \mathcal{H}(x')$.

the probability of success of $\mathcal{A}$ is negligible. To see why, assume without loss of generality that $\mathcal{A}$ outputs values $x, x'$ that were queried before the maximum number of $q$ queries is attained. Moreover, assume that an input is never queried more than once. Since the output of $\mathcal{H}$ is randomly generated for every query (no query is repeated), we get:

Hence we conclude that:

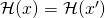
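To get a feel for the orders of magnitude involved, the sketch below compares the standard union bound over pairs of queries, $\binom{q}{2} / 2^{n}$, with an empirical collision frequency for a deliberately tiny output length. The function names, the choice of $n$-bit uniform replies, and the fixed seed are assumptions made for this illustration; the exact bound used in the text is the one shown in the figure above.

```python
import random


def collision_union_bound(num_queries: int, output_bits: int) -> float:
    """Union bound over all pairs of distinct queries: C(q, 2) / 2^n."""
    pairs = num_queries * (num_queries - 1) // 2
    return pairs / 2 ** output_bits


def collision_frequency(num_queries: int, output_bits: int,
                        trials: int, seed: int = 0) -> float:
    """Fraction of trials in which q fresh uniform replies contain a repeat."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        replies = [rng.getrandbits(output_bits) for _ in range(num_queries)]
        if len(set(replies)) < num_queries:
            hits += 1
    return hits / trials


bound = collision_union_bound(num_queries=20, output_bits=16)
observed = collision_frequency(num_queries=20, output_bits=16, trials=2000)
print(bound, observed)
```

With a realistic output length such as 256 bits, the same bound stays astronomically small even for a very large (but polynomial) number of queries.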

Before concluding this section, we highlight the basic idea behind conducting security proofs in the RO model. We contrast it with security proofs in the standard model [2].

The important thing to note in the above comparison is that in the standard model, the hash function $H$ is fixed and hence is not taken into account when calculating the probability of success of $\mathcal{A}$ with respect to ![]() . On the other hand, in the RO model, $\mathcal{H}$ is random and so is taken into account when computing the probability. Since we cannot be certain that the security result would hold for a particular value of $\mathcal{H}$, we cannot extend the security implication in the RO model to the standard model.

5. Probabilistic Turing machines

The following is a very brief overview of non-deterministic and probabilistic Turing machines. The objective is not to present a detailed analysis of this topic, but rather to gain enough insight and intuition to understand the nature of probabilistic algorithms in digital signature schemes and malevolent adversaries. Most of the material presented here is based on [5].

A Turing machine is a theoretical computer science construct. It can be thought of as a machine or a computer that follows pre-defined rules to read and write symbols (one at a time) chosen from a pre-defined alphabet set. At each time increment, it looks at its current state and the current symbol it is reading. These form its input to the pre-defined set of rules in order to figure out what action to take. As an example, suppose the machine is in state happy with symbol ![]() . The pre-defined rule for this pair of inputs consists in modifying ![]() to ![]() , moving one step to the left, and updating its state to neutral.

A Deterministic Turing Machine $M$ can be formally defined as a 6-tuple $(Q, \Gamma, q_0, b, F, \delta)$ in the following way:

- $Q$ is the universe of allowed states, which is a finite set.
- $\Gamma$ is the universe of allowed symbols or alphabet, which is also a finite set.
- $q_0$ is an element of the state-space $Q$ that refers to the initial state.
- $b$ is an element of the alphabet $\Gamma$ that denotes the blank symbol.
- $F$ is a subset of the state-space $Q$ that contains the allowed final states.
- $\delta$ is a function $\delta: (Q \setminus F) \times \Gamma \rightarrow Q \times \Gamma \times \{L, R, S\}$ which takes as input the current state (which must not be an element of the final state set) and the current symbol. It maps them to a well-defined 3-tuple that includes a new state, a new symbol, and a tape movement. Here the tape can either move to the left ($L$), move to the right ($R$), or stay put ($S$).
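The definition above can be made concrete with a small simulator. The machine below is a hypothetical example (it flips every bit of its input and halts at the first blank); the state names, the `_` blank symbol, and the convention of moving a head over a sparse tape rather than moving the tape are all assumptions made for the sketch.

```python
BLANK = "_"

# Transition function: (state, symbol) -> (new state, new symbol, head move).
# A hypothetical machine that flips every bit, then halts on the first blank.
DELTA = {
    ("scan", "0"): ("scan", "1", +1),
    ("scan", "1"): ("scan", "0", +1),
    ("scan", BLANK): ("done", BLANK, 0),
}
FINAL_STATES = {"done"}


def run(tape_input: str, state: str = "scan", max_steps: int = 1000) -> str:
    tape = dict(enumerate(tape_input))  # sparse tape: position -> symbol
    head = 0
    for _ in range(max_steps):
        if state in FINAL_STATES:
            break
        symbol = tape.get(head, BLANK)
        # Deterministic: exactly one rule applies to each (state, symbol).
        state, tape[head], move = DELTA[(state, symbol)]
        head += move
    cells = [tape[i] for i in sorted(tape)]
    return "".join(cells).strip(BLANK)


print(run("10110"))  # prints 01001
```

Because `DELTA` is a dictionary, every (state, symbol) pair has at most one outcome, which is exactly what makes the machine deterministic.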

A Non-Deterministic Turing machine can be defined in exactly the same way as its deterministic counterpart with one exception. The relation $\delta$ is no longer a function but rather a transition relation that allows an input to be mapped to more than just one output. With $Q$, $\Gamma$ and $F$ as in the deterministic case, the transition relation $\delta$ is defined as a subset of the following cross product:

$$\delta \subseteq \big((Q \setminus F) \times \Gamma\big) \times \big(Q \times \Gamma \times \{L, R, S\}\big)$$

The question that remains is how to decide which output to choose if there are many allowed per input. One way of resolving it is by choosing an output drawn from some probability distribution over the range of allowed outputs. This is how a Probabilistic Turing Machine operates. We associate with it a random tape $\omega$ that encapsulates the probability distribution used by the transition relation $\delta$. In subsequent sections, we model hypothetical adversaries as probabilistic polynomial-time Turing machines (PPT for short) with random tape $\omega$. We write $\mathcal{A}(\omega)$.

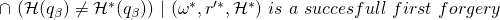
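One practical way to read the notation $\mathcal{A}(\omega)$ is that a probabilistic algorithm becomes a deterministic one as soon as its random tape is fixed. The toy sketch below illustrates this with a seeded pseudo-random generator standing in for the tape; the function `adversary` and its parameters are hypothetical and do not model any real attack.

```python
import random


def adversary(challenge: int, tape_seed: int) -> list:
    """Toy probabilistic algorithm: all of its coin flips come from the tape."""
    tape = random.Random(tape_seed)  # the seed stands in for the random tape
    guesses = [tape.randrange(challenge) for _ in range(5)]
    return guesses


run_1 = adversary(challenge=1000, tape_seed=42)
run_2 = adversary(challenge=1000, tape_seed=42)   # same tape replayed
run_3 = adversary(challenge=1000, tape_seed=43)   # fresh tape
print(run_1 == run_2)  # prints True
```

Replaying an execution with the same tape is the mechanism that the reduction model of the next section relies on.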

6. The reduction model

Recall that proving security of a signature scheme requires proving resilience against forgery. By that we mean resilience against existential forgery under an adaptively chosen-message attack, or EFACM. In this setting, $\mathcal{A}$ can have access to RO as well as to $\Sigma$ (the signing algorithm of a given user). However, $\mathcal{A}$ does not have access to any user’s private key. So $\mathcal{A}$ can send any message $m$ to $\Sigma$ and receive a signature on $m$ as if it were generated by the given user. The objective of $\mathcal{A}$ is to be able to create its own signature forgery based on all the queries that it sent to RO and to $\Sigma$. Clearly, $\mathcal{A}$ cannot just regurgitate a signature that was created by $\Sigma$ during one of its earlier queries. It has to create its own. We are then faced with the question of how to approach the problem of proving resilience against EFACM. The method we follow was introduced by [4]. The idea is to establish a logical connection between a successful EFACM attack and breaking a hard computational problem (usually a discrete logarithm over some cyclic group). As such, it is considered a conditional proof (i.e., conditional on the intractability of another problem).

To better understand the logic supporting such proofs, let’s take another look at the structure of a digital signature scheme. The schemes that we consider have a common skeletal structure. The signing algorithm ![]() creates 3 types of parameters that go into building its output signature. The 3 types are:

creates 3 types of parameters that go into building its output signature. The 3 types are:

- One or more randomly chosen parameters, say

. These are generated in accordance with

. These are generated in accordance with  ‘s random tape

‘s random tape  (because

(because  is non-deterministic, we model it as a PPT Turing machine with a random tape).

is non-deterministic, we model it as a PPT Turing machine with a random tape). - One or more outputs of RO on queries that are themselves functions of any of the following:

- A subset of the random parameters, say

.

. - The message

to be signed.

to be signed. - Some other public data, say

(e.g., involving the public key(s) of the signer or a ring of signers as we will see when we introduce ring signature schemes).

(e.g., involving the public key(s) of the signer or a ring of signers as we will see when we introduce ring signature schemes). - The secret key(s) of the signer (or a ring of signers), say

.

The output of query

.

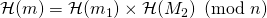

The output of query to the RO can then be represented by

to the RO can then be represented by ![Rendered by QuickLaTeX.com \mathcal{H}[f_{i}(R_{i}, m, P_{i}, X_{i})]](https://delfr.com/wp-content/ql-cache/quicklatex.com-0424186b0d2388cfab77aa95675c9071_l3.png) , where

, where  is some pre-defined function on the relevant parameters, and here

is some pre-defined function on the relevant parameters, and here  indicates a specific instance of an input parameters.

indicates a specific instance of an input parameters.

- A subset of the random parameters, say

- One or more elements that are completely determined by: 1) A subset of the secret key(s) of the signer (or a ring of signers), 2) An element of the first type as described above, and 3) An element of the second type as described above. Call them

.

.

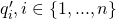

For example, we can easily identify the non-interactive version of Schnorr’s signature scheme with this structure. Indeed, the signer randomly chooses a value $k$ known as a commitment and computes $r_1 = g^k$, where $g$ is a generator of the group. Then the challenge is calculated as $\mathcal{H}(r_1, m)$. Finally ![]() is calculated as ![]() , where ![]() denotes the signer’s private key. So we can see that:

- Schnorr has a single random parameter: $r_1$.
- It has a single function $f_1$ such that

  ![Rendered by QuickLaTeX.com \mathcal{H}[f_{1}(R_{1}, m, P_{1}, X_{1})] \equiv \mathcal{H}(r_{1},m) = \mathcal{H}(g^{k},m)](https://delfr.com/wp-content/ql-cache/quicklatex.com-0e2728f09ca67a4439ace8fc8718b700_l3.png)

  And so ![]() and ![]() .
- It has a single fully determined parameter given by ![]() .
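To make the three types of parameters tangible, here is a toy Schnorr signature over a very small group. Several things are assumptions made for this sketch and are not taken from the text: the response convention $s = k + c \cdot x \bmod q$ (other presentations use a minus sign), the verification equation $g^s = r \cdot y^c$, the toy parameters $p = 23$, $q = 11$, $g = 2$, and the use of SHA-256 reduced modulo $q$ in place of the RO. Parameters this small offer no security whatsoever.

```python
import hashlib
import secrets

P, Q, G = 23, 11, 2   # toy parameters: G has prime order Q modulo P


def challenge(r: int, message: bytes) -> int:
    """Hash stand-in for the RO query H(r, m), reduced modulo Q."""
    data = str(r).encode() + b"|" + message
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def keygen():
    x = secrets.randbelow(Q - 1) + 1      # private key in [1, Q-1]
    return x, pow(G, x, P)                # (private key, public key)


def sign(x: int, message: bytes):
    k = secrets.randbelow(Q - 1) + 1      # random commitment exponent
    r = pow(G, k, P)                      # first type: random parameter
    c = challenge(r, message)             # second type: RO output
    s = (k + c * x) % Q                   # third type: fully determined
    return r, s


def verify(y: int, message: bytes, signature) -> bool:
    r, s = signature
    c = challenge(r, message)
    # Holds for honest signatures because g^(k + c*x) = g^k * (g^x)^c.
    return pow(G, s, P) == (r * pow(y, c, P)) % P


x, y = keygen()
sig = sign(x, b"hello")
print(verify(y, b"hello", sig))  # prints True
```

The comments in `sign` map each computed value to one of the three parameter types listed earlier.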

Any valid signature ![]() must pass the test of the verification algorithm ![]() . In general, the verifier will conduct a number of queries (say a total of ![]() queries) to RO (or to the hash function in the case of the standard model) and use them to check if a certain relationship holds among some of the signature outputs. In the signature schemes that we consider in this series, we can always identify an equation of the form ![]() where

- $g$ is a generator of the underlying multiplicative cyclic group of the signature scheme.
- ![]() is a quantity calculated by the verifier that depends on a subset of the signature components, a subset of the queries that the verifier sends to RO, and a subset of the RO replies to these queries.
- ![]() is a quantity calculated by the verifier that depends on a subset of the signature components, a subset of the queries that the verifier sends to RO, and a subset of the RO replies to these queries that includes the reply to the last query.
- ![]() is a quantity calculated by the verifier that depends on a subset of the signature components, a subset of the queries that the verifier sends to RO, and a subset of the RO replies to these queries that does not include the reply to the last query.

The logic that we follow to prove that a scheme is resilient against EFACM in the RO model consists in being able to solve for ![]() in case the scheme were not resilient. That would imply the existence of an adversary $\mathcal{A}$ that is able to solve the DL problem on some group. Such a result would contradict the presumed intractability of DL, leading us to conclude that the likelihood of a forgery is negligible.

The question becomes one of linking EFACM with extracting ![]() . Note that if ![]() were known, one could use ![]() to calculate what the secret key ![]() is. However, since the DL problem is assumed to be intractable, finding ![]() would be hard. One would need another linear relationship in ![]() to solve for the secret key.

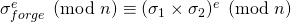

Suppose a given scheme were not resilient against EFACM, and let ![]() be a forgery. We would then have an equation of the form ![]() . Suppose it can be demonstrated that if the adversary is successful in generating an initial forgery, then we could replay the attack to generate a second forgery ![]() . We also require that the replay of the attack satisfies the following:

- The second instance of the experiment that generates the second forgery has the same random elements as the first instance of the experiment. Note that there are 2 sources of randomness: one from the random tape $\omega$ of the adversary $\mathcal{A}$ and another from the random tape $r$ of the signer’s signing algorithm $\Sigma$. Here we impose the constraint that the 2 random tapes are maintained between the first and the second instance.
- The queries and the replies that $\mathcal{A}$ sends and receives from RO are the same in the 2 instances, except for the reply on the last query ![]() .

Under these circumstances, we show when we analyze the different schemes that we can get an equation of the form ![]() associated with the second forgery. The important thing to note is that since ![]() and ![]() depend on the reply of RO to the ![]() query (which is different for the 2 forgery instances), they will be different from each other. The 2 relationships can then be used to solve for ![]() and solve the DL problem.
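The idea of combining two linear relationships can be illustrated on the Schnorr scheme. The sketch below assumes the convention $s = k + c \cdot x \bmod q$ and toy parameters $p = 23$, $q = 11$, $g = 2$; both are assumptions made for the example. Given two forgeries that share the same commitment but received different RO replies $c_1 \neq c_2$, subtracting the two equations eliminates $k$ and yields $x = (s_1 - s_2)(c_1 - c_2)^{-1} \bmod q$.

```python
P, Q, G = 23, 11, 2   # toy parameters: G has prime order Q modulo P


def extract_key(s1: int, c1: int, s2: int, c2: int) -> int:
    """Solve s1 = k + c1*x and s2 = k + c2*x (mod Q) for x."""
    diff = (c1 - c2) % Q
    if diff == 0:
        raise ValueError("the two challenges must differ modulo Q")
    return ((s1 - s2) * pow(diff, -1, Q)) % Q


# Hypothetical values for the demonstration; x and k are never given to
# extract_key, they are only used here to manufacture two related forgeries.
x, k = 7, 5
c1, c2 = 3, 9                      # two different replies to the same query
s1 = (k + c1 * x) % Q
s2 = (k + c2 * x) % Q

recovered = extract_key(s1, c1, s2, c2)
print(recovered)  # prints 7
```

Recovering `x` from public values in this way amounts to computing the discrete logarithm of the public key, which is the contradiction the reduction aims for. Note that `pow(diff, -1, Q)` requires Python 3.8 or later.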

The reduction model that we are building strives to achieve the above 2 conditions. Let’s formalize them. Suppose that an adversary $\mathcal{A}$ has a non-negligible probability of success in EFACM. As we saw in the RO section previously, this means that:

$$P_{\omega, r, \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \Sigma^{\mathcal{H}}(r)}\ \text{succeeds in EFACM}] = \epsilon(k), \quad \text{for } \epsilon \text{ non-negligible in } k$$

A successful forgery corresponds then to a tuple ![]() that allows $\mathcal{A}$ to issue a valid forged signature. It is important to note that ![]() is not a random function anymore but rather a fixed one that took its values after running the first forgery instance. Hence ![]() is a particular instance of $\mathcal{H}$. The 2 constraints regarding the issuance of a second forgery by adversary $\mathcal{A}$ can be summarized in the following equation:

which we can re-write as

A successful second forgery would then correspond to a tuple ![]() where here too, ![]() is a particular instance of $\mathcal{H}$ and is not a random function anymore.

To make sure that the second time we run the forgery experiment we use the same random elements that were generated in the first instance, we need to use the same tape $\omega$ for the adversary as well as the same tape $r$ for $\Sigma$. Making sure that we use the same $\omega$ is easy since the adversary can record the tape that it used in the first instance and apply it again in the second.

The situation is more difficult with replicating the random tape of $\Sigma$. This is because $\Sigma$ is not controlled by $\mathcal{A}$ and will never collude with it to allow it to forge a signature. $\Sigma$ will always act honestly and generate new random elements for every instance of an experiment. No constraints can be imposed on its random tape $r$. This calls for the creation of a new entity $\mathcal{S}$ that we refer to as a simulator with random tape $r'$. $\mathcal{S}$ would be under the control of $\mathcal{A}$ and hence its random tape $r'$ could be replayed. Clearly, $\mathcal{S}$ will not have access to any secret key, which is the main difference with $\Sigma$. Equally important is that $\mathcal{S}$ must satisfy the following:

If this is satisfied, then we would have

One way to ensure this equality is by:

- Making sure that $\Sigma$ and $\mathcal{S}$ have the same range (i.e., they output signatures taken from the same pool of potential signatures over all possible choices of RO functions and respective random tapes $r$ and $r'$).
- $\Sigma$ and $\mathcal{S}$ have indistinguishable probability distributions over this range (refer to [4] for a definition of indistinguishable distributions). In our case we assume statistical indistinguishability. This means that if we let ![]() be an ![]() -tuple of independent signatures, then:

1 and 2 above allow us to write:

$\mathcal{S}$ also needs to issue valid signatures that pass the verification test. The last step of a verification algorithm is to check whether a certain relationship holds or not. In order to compute the elements for this relationship test, the verifier calculates a number of intermediary values. Intermediary value ![]() is usually a function of the form:

where ![]() is a subset of the random parameters generated by the signing algorithm, ![]() is a set of public information including the signer’s public key, $m$ is the message, and ![]() is the ![]() query sent by the verifier to RO.

For the final verification test to be passed, each of these equations must evaluate to a determined value. Any deviation would result in a failed verification. The signing algorithm $\Sigma$ uses its knowledge of the signer’s secret key to enforce a correct evaluation. However, $\mathcal{S}$ does not have access to the appropriate secret key. And so to make sure that the verification test is satisfied, it will conduct its own random assignment to what otherwise would be calls to RO. We refer to this process as back-patching. This methodology guarantees valid signatures; however, it requires that $\mathcal{S}$ bypasses RO. From the perspective of $\mathcal{A}$, these assignments are random and it has no way of telling whether they were generated by RO or by another random process. This will not compromise the execution of an experiment as long as the following 2 situations are avoided:

- One of the queries that $\mathcal{S}$ does its own assignment for (i.e., bypassing RO) also gets queried by $\mathcal{A}$ directly to RO during execution. Odds are the 2 values assigned by $\mathcal{S}$ and by RO will not match. And so with overwhelming probability, the execution of $\mathcal{A}$ will halt. We call this a collision of type 1.
- Suppose $\mathcal{A}$ asks $\mathcal{S}$ to sign a certain message $m$. As part of this process, $\mathcal{S}$ randomly assigns a value to relevant queries ![]() (for some ![]() ). Suppose that at a later time instance, $\mathcal{A}$ asks $\mathcal{S}$ to sign some other message $m'$ (it could be equal to $m$). Here again, $\mathcal{S}$ randomly assigns a value to each of the relevant queries ![]() . A problem would arise if ![]() for some ![]() , because the 2 random assignments that $\mathcal{S}$ would issue for these 2 queries will be different with overwhelming probability, and the execution of $\mathcal{A}$ will halt. We call this a collision of type 2.

If the probability of occurrence of these types of collisions is negligible, the simulator $\mathcal{S}$ can safely do its own random assignment to queries appearing in the verification algorithm without meaningfully affecting the execution. We are then justified in dropping the dependence of $\mathcal{S}$ on $\mathcal{H}$ and simply write $\mathcal{S}(r')$.
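Back-patching is easiest to picture on a concrete scheme. The sketch below shows one possible simulator for a toy Schnorr signature, assuming the verification equation $g^s = r \cdot y^c$ and the toy parameters $p = 23$, $q = 11$, $g = 2$; the class `PatchableOracle` and the function names are hypothetical. The simulator picks the response $s$ and the would-be RO reply $c$ first, solves the verification equation for $r$, and then programs the oracle table so that the query $(r, m)$ returns $c$. It never touches the private key. A `RuntimeError` corresponds to a collision in the sense described above.

```python
import secrets

P, Q, G = 23, 11, 2   # toy parameters: G has prime order Q modulo P


class PatchableOracle:
    """Lazy random oracle whose table the simulator is allowed to program."""

    def __init__(self):
        self.table = {}

    def query(self, r: int, message: bytes) -> int:
        key = (r, message)
        if key not in self.table:
            self.table[key] = secrets.randbelow(Q)
        return self.table[key]

    def patch(self, r: int, message: bytes, value: int) -> None:
        key = (r, message)
        if key in self.table and self.table[key] != value:
            raise RuntimeError("collision: query already has another reply")
        self.table[key] = value


def simulate_signature(oracle, y: int, message: bytes):
    """Produce a valid-looking signature from the public key y alone."""
    s = secrets.randbelow(Q)                 # pick the response first
    c = secrets.randbelow(Q)                 # pick the would-be RO reply
    r = (pow(G, s, P) * pow(y, -c, P)) % P   # solve g^s = r * y^c for r
    oracle.patch(r, message, c)              # back-patch H(r, m) := c
    return r, s


def verify(oracle, y: int, message: bytes, signature) -> bool:
    r, s = signature
    return pow(G, s, P) == (r * pow(y, oracle.query(r, message), P)) % P


oracle = PatchableOracle()
y = pow(G, 7, P)                             # public key; 7 is never used below
try:
    sig = simulate_signature(oracle, y, b"hello")
    print(verify(oracle, y, b"hello", sig))
except RuntimeError as err:
    print("simulation aborted:", err)
```

In a group this small collisions are frequent when the oracle is reused across many signatures; with realistic parameters they occur only with negligible probability, which is what the third step of the outline below has to establish. Negative exponents in `pow` require Python 3.8 or later.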

We close this section with a summary of the outline that we follow in the future to prove resilience against EFACM in the RO model:

- The first step is to assume that there exists a PPT adversary $\mathcal{A}$ successful in EFACM in the RO model. In other terms, we assume that:

  ![Rendered by QuickLaTeX.com P_{\omega,r, \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \Sigma^{\mathcal{H}}(r)}\ succeeds\ in\ EFACM] = \epsilon(k)](https://delfr.com/wp-content/ql-cache/quicklatex.com-501901e984d4c055f0e4e10186fe6ac5_l3.png)

  for $\epsilon$ non-negligible in k.
- The second step is to build a simulator $\mathcal{S}$ such that it:
  - Does not have access to the private key of any signer.
  - Has the same range as $\Sigma$ (i.e., they output signatures taken from the same pool of potential signatures over all possible choices of RO functions and respective random tapes $r$ and $r'$).
  - Has indistinguishable probability distribution from that of $\Sigma$ over this range.

  Step 1 and the construction in Step 2 imply that:

  ![Rendered by QuickLaTeX.com P_{\omega,r,' \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \mathcal{S}(r')}\ succeeds\ in\ EFACM] = \epsilon(k)](https://delfr.com/wp-content/ql-cache/quicklatex.com-5f50a555c04577b6e06544f5bcc52656_l3.png)

  for $\epsilon$ non-negligible in k.
- The third step is to show that ![Rendered by QuickLaTeX.com P[Col] = \delta(k)](https://delfr.com/wp-content/ql-cache/quicklatex.com-0d51748b3cf319d5594d3f5305eececd_l3.png) , where $\delta$ is negligible in k. Col refers to Collisions of type 1 or 2. This allows us to write:

![Rendered by QuickLaTeX.com P_{\omega,r,' \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \mathcal{S}(r')} succeeds\ in\ EFACM]](https://delfr.com/wp-content/ql-cache/quicklatex.com-cb68a3a35088d13d8bae75ac81dc76f8_l3.png)

![Rendered by QuickLaTeX.com =P_{\omega,r,' \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \mathcal{S}(r')} succeeds\ in\ EFACM | Col]\ \times P[Col]](https://delfr.com/wp-content/ql-cache/quicklatex.com-a47f492e585eb58f206a07400cd01d15_l3.png)

![Rendered by QuickLaTeX.com + P_{\omega,r,' \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \mathcal{S}(r')} succeeds\ in\ EFACM | \overline{Col}]\ \times P[\overline{Col}]](https://delfr.com/wp-content/ql-cache/quicklatex.com-206b98d2206a46a7ff0fca78babe5587_l3.png)

![Rendered by QuickLaTeX.com \leq P[Col] + P_{\omega,r,' \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \mathcal{S}(r')} succeeds\ in\ EFACM | \overline{Col}]\ \times P[\overline{Col}]](https://delfr.com/wp-content/ql-cache/quicklatex.com-e3fb20c33dd8443d95d258a91b2e9394_l3.png)

![Rendered by QuickLaTeX.com = \delta(k) + P_{\omega,r,' \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \mathcal{S}(r')} succeeds\ in\ EFACM | \overline{Col}] \times(1-\delta(k))](https://delfr.com/wp-content/ql-cache/quicklatex.com-34abe4e8c8704b3c41f5e697a220625e_l3.png)

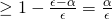

And so we can conclude that:

![Rendered by QuickLaTeX.com P_{\omega,r,' \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \mathcal{S}(r')} succeeds\ in\ EFACM | \overline{Col}] \geq \frac{\epsilon(k) - \delta(k)}{1-\delta(k)}](https://delfr.com/wp-content/ql-cache/quicklatex.com-e335ac56e1f0ff14c46634db0c6748c0_l3.png)

which is non-negligible in $k$. And hence, multiplying both sides by $P[\overline{Col}] = 1 - \delta(k)$, that:

![Rendered by QuickLaTeX.com P_{\omega,r,' \mathcal{H}}[\mathcal{A}(\omega)^{\mathcal{H}, \mathcal{S}(r')} succeeds\ in\ EFACM\ \cap \overline{Col}] \geq \epsilon(k) - \delta(k)](https://delfr.com/wp-content/ql-cache/quicklatex.com-d228e25e0d1e6c099456a7b4304f1c09_l3.png)

which is non-negligible in $k$.

- The fourth step is to prove that if a tuple consisting of the adversary's random tape, the simulator's random tape, and the RO function is a successful tuple that generated a first EFACM forgery, then the following is non-negligible in $k$:

![Rendered by QuickLaTeX.com and\ (\mathcal{H}(q_{i}) = \mathcal{H}^{*}(q_{i}))\ for\ i \in \{{1,...\beta -1\}}] \equiv \epsilon'(k)](https://delfr.com/wp-content/ql-cache/quicklatex.com-9bffc91c2dfb0a4f4f9ee28a24637f9a_l3.png)

From this we can obtain a second successful forgery whose random oracle agrees with that of the first forgery on the queries $q_{1}, ..., q_{\beta - 1}$ and differs from it on the query $q_{\beta}$.

In order to prove Step 4, we will make use of the splitting lemma introduced in [4] that we describe in the following section.

- The fifth and last step is to use the 2 forgeries obtained earlier to solve an instance of the Discrete Logarithm (DL) problem. This would contradict the intractability of DL on the pre-defined group. It follows that the initial assumption is flawed and that the scheme is resilient against EFACM in the RO model.
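As a rough illustration of the fifth step, the sketch below assumes a Schnorr-style scheme in which a response is computed as `s = k + c * x mod q`. This is an assumption made for concreteness, since the outline above does not fix a particular scheme. The toy parameters `P`, `Q`, `G` and the helper names are hypothetical, and the group is far too small to be secure. If two valid forgeries share the same commitment but carry different challenges, the secret key can be recovered with one modular division:

```python
# Toy parameters (hypothetical): G = 2 generates a subgroup of prime order Q = 11 in Z_23^*.
P = 23
Q = 11
G = 2


def respond(secret_key: int, nonce: int, challenge: int) -> int:
    """Schnorr-style response s = nonce + challenge * secret_key (mod Q)."""
    return (nonce + challenge * secret_key) % Q


def verifies(public_key: int, commitment: int, challenge: int, response: int) -> bool:
    """Check that G^s == commitment * public_key^challenge (mod P)."""
    return pow(G, response, P) == (commitment * pow(public_key, challenge, P)) % P


def extract_secret(c1: int, s1: int, c2: int, s2: int) -> int:
    """Recover the secret key from two responses that share one commitment."""
    diff = (c1 - c2) % Q
    if diff == 0:
        raise ValueError("the two challenges must differ modulo Q")
    # s1 - s2 = (c1 - c2) * x (mod Q), so x = (s1 - s2) / (c1 - c2) (mod Q).
    return ((s1 - s2) * pow(diff, -1, Q)) % Q


# Two transcripts with the same nonce (same commitment) and different challenges.
secret, nonce = 7, 5
public = pow(G, secret, P)
commitment = pow(G, nonce, P)
c_first, c_second = 3, 8
s_first = respond(secret, nonce, c_first)
s_second = respond(secret, nonce, c_second)
recovered = extract_secret(c_first, s_first, c_second, s_second)
```

Here the two challenges play the role of the two different oracle answers to the same query. The modular inverse via `pow(diff, -1, Q)` requires Python 3.8 or later.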

7. The Splitting lemma

The following lemma, introduced in [4], will play a crucial role in proving Step 4 previously described.

Splitting lemma:

Let $A$ be a subset of a product space $X \times Y$, where each of $X$ and $Y$ is associated with a certain probability distribution.

Let $\epsilon$ be such that $P_{(x,y) \sim (X \times Y)}[(x,y) \in A] \geq \epsilon$, where $(x,y)$ is drawn from the joint distribution over $X$ and $Y$.

For any $\alpha < \epsilon$, we define a new subset of $X \times Y$ as follows:

$B = \{(x,y) \in X \times Y\ |\ P_{y' \sim Y}[(x,y') \in A] \geq \epsilon - \alpha\}$

Then the following results hold:

- $P_{(x,y) \sim (X \times Y)}[(x,y) \in B] \geq \alpha$

- $\forall (x,y) \in B,\ P_{y' \sim Y}[(x,y') \in A] \geq \epsilon - \alpha$

- $P_{(x,y) \sim (X \times Y)}[(x,y) \in B\ |\ (x,y) \in A] \geq \frac{\alpha}{\epsilon}$

Proof:

- Assume the contrary, i.e., ![Rendered by QuickLaTeX.com P_{(x,y) \sim (X \times Y)}[(x,y) \in B] < \alpha](https://delfr.com/wp-content/ql-cache/quicklatex.com-1a3bcec690cea8dbcd8a6224f75a7a5a_l3.png). Then we can write:

![Rendered by QuickLaTeX.com \epsilon \leq P_{(x,y) \sim (X \times Y)}[(x,y) \in A]](https://delfr.com/wp-content/ql-cache/quicklatex.com-755a5c1b6efd643fab0d6727359afb98_l3.png)

![Rendered by QuickLaTeX.com =P_{(x,y) \sim (X \times Y)}[(x,y) \in A\ |\ (x,y) \in B] \times P_{(x,y) \sim (X \times Y)}[(x,y) \in B]](https://delfr.com/wp-content/ql-cache/quicklatex.com-02e88faa387c6e2e79800c84bcb56ad7_l3.png)

![Rendered by QuickLaTeX.com +\ P_{(x,y) \sim (X \times Y)}[(x,y) \in A\ |\ (x,y) \in \overline{B}] \times P_{(x,y) \sim (X \times Y)}[(x,y) \in \overline{B}]](https://delfr.com/wp-content/ql-cache/quicklatex.com-9a7d3a2bc529ac9da0d481f06bb2e0cb_l3.png)

![Rendered by QuickLaTeX.com < 1 \times \alpha + P_{(x,y) \sim (X \times Y)}[(x,y) \in A\ |\ (x,y) \in \overline{B}]\ \times 1](https://delfr.com/wp-content/ql-cache/quicklatex.com-f0951f70485f1afc7baa71fd740baa21_l3.png)

where the last inequality is derived from the fact that, by construction of $B$, $(x,y) \in \overline{B}$ implies that $P_{y' \sim Y}[(x,y') \in A] < \epsilon - \alpha$, and hence that $P_{(x,y) \sim (X \times Y)}[(x,y) \in A\ |\ (x,y) \in \overline{B}] < \epsilon - \alpha$.

So $\epsilon < \alpha + (\epsilon - \alpha) = \epsilon$, which is a contradiction.

- This result is a direct consequence of the definition of $B$.

- Bayes' rule gives:

![Rendered by QuickLaTeX.com P_{(x,y) \sim (X \times Y)}[(x,y) \in B\ |\ (x,y) \in A]](https://delfr.com/wp-content/ql-cache/quicklatex.com-fd1e853135c6a575ad6a533910df01a5_l3.png)

![Rendered by QuickLaTeX.com = 1 - P_{(x,y) \sim (X \times Y)}[(x,y) \in \overline B\ |\ (x,y) \in A]](https://delfr.com/wp-content/ql-cache/quicklatex.com-dea5a579d99dd2b66361e2813b1d2219_l3.png)

![Rendered by QuickLaTeX.com = 1 - \frac{P_{(x,y) \sim (X \times Y)}[(x,y) \in A\ |\ (x,y) \in \overline{B}] \times P_{(x,y) \sim (X \times Y)}[(x,y) \in \overline{B}]}{P_{(x,y) \sim (X \times Y)}[(x,y) \in A]}](https://delfr.com/wp-content/ql-cache/quicklatex.com-63003aaba93f670428c14d81e6a1ba85_l3.png)

![Rendered by QuickLaTeX.com \geq 1 - \frac{P_{(x,y) \sim (X \times Y)}[(x,y) \in A\ |\ (x,y) \in \overline{B}]}{P_{(x,y) \sim (X \times Y)}[(x,y) \in A]}](https://delfr.com/wp-content/ql-cache/quicklatex.com-a40fd14f921e8de060ca21fd25fe2729_l3.png)
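The chain above stops one step short of the bound $\frac{\alpha}{\epsilon}$ given in [4]. One way to finish it, sketched here under the assumptions that $P[(x,y) \in A] \geq \epsilon$ and that the construction of $B$ gives $P[(x,y) \in A\ |\ (x,y) \in \overline{B}] < \epsilon - \alpha$ (subscripts are dropped for brevity), is:

```latex
\begin{aligned}
P[(x,y) \in B\ |\ (x,y) \in A]
  &\geq 1 - \frac{P[(x,y) \in A\ |\ (x,y) \in \overline{B}]}{P[(x,y) \in A]} \\
  &> 1 - \frac{\epsilon - \alpha}{\epsilon} \\
  &= \frac{\alpha}{\epsilon}
\end{aligned}
```

The second line uses both assumptions at once: the numerator is bounded above by $\epsilon - \alpha$ and the denominator is bounded below by $\epsilon$.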

As an example, let ![]() and let the probability distribution on each of $X$ and $Y$ be the uniform distribution. Moreover, assume that $x \sim X$ and $y \sim Y$ are independent random variables.

Let $A = \{(1,2),(1,3),(1,4),(1,5),(1,6),(2,4),(2,5),(3,6)\}$

Then ![]() . So we let

. So we let ![]() . We also set

. We also set ![]()

Lastly, we set $B = \{(x,y) \in X \times Y\ |\ P_{y' \sim Y}[(x,y') \in A] \geq \epsilon - \alpha\}$

Note that if $P_{y' \sim Y}[(x,y') \in A] = 0$, then $(x,y) \notin B$ by construction of $B$. So any $x$ that is not part of at least one element of $A$ cannot be part of any element of $B$. We focus next on the remaining $x$ values:

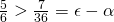

- If $x = 1$:

![Rendered by QuickLaTeX.com P_{y' \sim Y}[(x,y') \in A\ |\ x=1]](https://delfr.com/wp-content/ql-cache/quicklatex.com-c72e7f8d0b9b4fbabe653e927252646b_l3.png)

![Rendered by QuickLaTeX.com = P_{y' \sim Y}[(x,y') \in \{{(1,2),(1,3),(1,4),(1,5),(1,6)\}}] = \frac{5}{6}](https://delfr.com/wp-content/ql-cache/quicklatex.com-8e4b97a35c101021086d584f63426595_l3.png)

And since $\frac{5}{6} \geq \epsilon - \alpha$, we conclude that all tuples in the $x = 1$ section (i.e., all tuples with $x = 1$) are members of $B$.

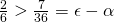

- If $x = 2$:

![Rendered by QuickLaTeX.com P_{y' \sim Y}[(x,y') \in A\ |\ x=2]](https://delfr.com/wp-content/ql-cache/quicklatex.com-08e3290f6f703c52a9f442eee4c8c9ba_l3.png)

![Rendered by QuickLaTeX.com = P_{y' \sim Y}[(x,y') \in \{{(2,4),(2,5)\}}] = \frac{2}{6}](https://delfr.com/wp-content/ql-cache/quicklatex.com-831ef54d1c07a6f19f301e7760aa054c_l3.png)

And since $\frac{2}{6} \geq \epsilon - \alpha$, we conclude that all tuples in the $x = 2$ section (i.e., all tuples with $x = 2$) are members of $B$.

- If $x = 3$:

![Rendered by QuickLaTeX.com P_{y' \sim Y}[(x,y') \in A\ |\ x=3]](https://delfr.com/wp-content/ql-cache/quicklatex.com-f5a46c5d143834bbfa08a452a773c95f_l3.png)

![Rendered by QuickLaTeX.com = P_{y' \sim Y}[(x,y') \in \{{(3,6)\}}] = \frac{1}{6}](https://delfr.com/wp-content/ql-cache/quicklatex.com-12a04c9ebb2ca922be4220654435cca2_l3.png)

And since $\frac{1}{6} < \epsilon - \alpha$, we conclude that none of the tuples in the $x = 3$ section (i.e., the tuples with $x = 3$) is a member of $B$.
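The example can also be checked by brute force. The sketch below is not the author's computation: it assumes $X = Y = \{1, ..., 6\}$, takes $A$ from the sections listed above, and picks $\epsilon = P[A]$ and $\alpha = \frac{1}{36}$ as hypothetical values that reproduce the same membership pattern ($x = 1$ and $x = 2$ in $B$, $x = 3$ out). The function names are illustrative.

```python
from fractions import Fraction
from itertools import product

# Assumed sample spaces: two independent, uniformly distributed values in 1..6.
X = range(1, 7)
Y = range(1, 7)

# The set A, read off the x = 1, x = 2 and x = 3 sections of the example.
A = {(1, 2), (1, 3), (1, 4), (1, 5), (1, 6), (2, 4), (2, 5), (3, 6)}


def prob(event):
    """Probability of a set of (x, y) pairs under the uniform product distribution."""
    return Fraction(len(event), len(X) * len(Y))


def section_prob(x):
    """P_{y' ~ Y}[(x, y') in A] for a fixed x."""
    return Fraction(sum((x, y) in A for y in Y), len(Y))


def heavy_set(eps, alpha):
    """B = {(x, y) : P_{y' ~ Y}[(x, y') in A] >= eps - alpha}."""
    return {(x, y) for x, y in product(X, Y) if section_prob(x) >= eps - alpha}


eps = prob(A)            # hypothetical choice: eps equal to P[A]
alpha = Fraction(1, 36)  # hypothetical choice with alpha < eps
B = heavy_set(eps, alpha)

# The three statements of the splitting lemma, checked exhaustively.
result_1 = prob(B) >= alpha
result_2 = all(section_prob(x) >= eps - alpha for x, _ in B)
result_3 = prob(A & B) / prob(A) >= alpha / eps
```

Using `Fraction` keeps every comparison exact, which matters here because the threshold $\epsilon - \alpha$ sits close to the $x = 3$ section probability.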

In essence, the splitting lemma states that if a subset $A$ is ‘big enough’ in a given product space, then it is guaranteed to have many ‘big enough’ sections.

References

[1] S. Goldwasser, S. Micali, and R. Rivest. A digital signature scheme secure against adaptive chosen-message attacks. SIAM Journal on Computing, pages 281-308, 1988.

[2] J. Katz and Y. Lindell. Introduction to Modern Cryptography. CRC Press, 2008.

[3] R. Lidl and H. Niederreiter. Introduction to Finite Fields and their Applications. Cambridge University Press, 1986.

[4] D. Pointcheval and J. Stern. Security arguments for digital signatures and blind signatures. Journal of Cryptology, 2000.

[5] Wikipedia. Non-deterministic Turing machine.

Tags: Crypto, Hash function, Math, Monero, Privacy, Probabilistic Turing Machine, Random Oracle, Reduction model, Security, Signature, Splitting lemma
